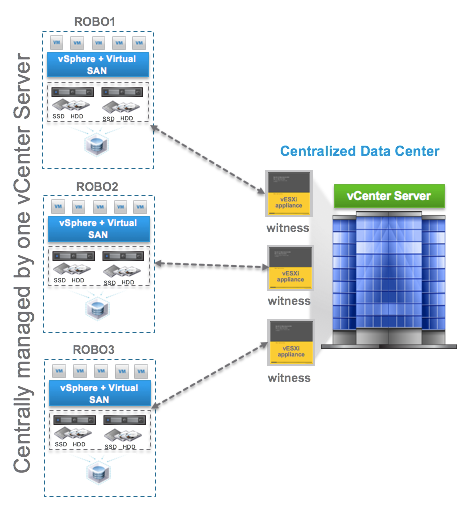

I continue to think one of the hidden gem features of VMware Virtual SAN (VSAN) is its software defined self healing ability. I recently received a request for a description of 2-Node self healing. I wrote about our self healing capabilities for 3-Node, 4-Node and more here. And I wrote about Virtual SAN 6 Rack Awareness Software Defined Self Healing with Failure Domains here. I suggest you check out both before reading the rest of this. I also suggest you check out these two posts on 2-Node VSAN for a description on how they work here and are licensed here.

For VSAN, protection levels can be defined through VMware’s Storage Policy Based Management (SPBM) which is built into vSphere and managed through vCenter. VM objects can be assigned to different policy which dictates the protection level they receive on VSAN. With a 2-Node Virtual SAN there is only one option for protection, which is the default # Failures To Tolerate (#FTT) equal to 1 using RAID1 mirroring. In other words, each VM will write to both hosts, if one fails, the data exists on the other host and is accessible as long as the VSAN Witness VM is available.

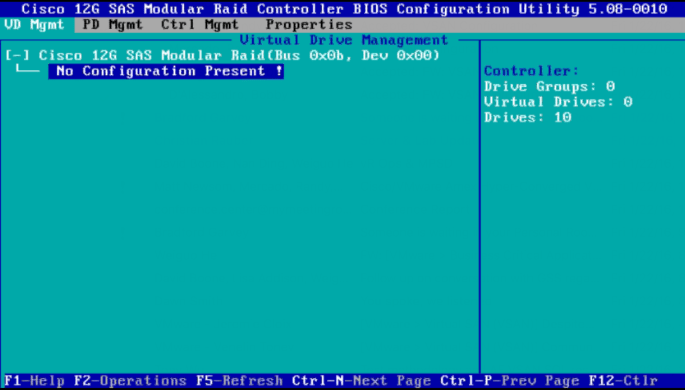

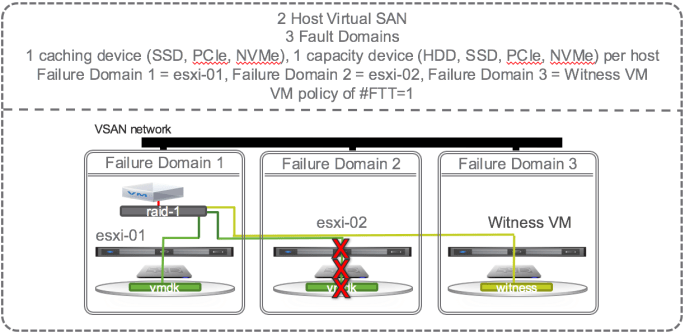

Now that we support 2-Node VSAN, the smallest VSAN configuration possible is 2 physical nodes with 1 caching device (SSD, PCIe, or NVMe) and 1 capacity device (HDD, SSD, PCIe, or NVMe) each and one virtual node (VSAN Witness VM) to hold all the witness components. Let’s focus on a single VM with the default # Failures To Tolerate (#FTT) equal to 1. A VM has at least 3 objects (namespace, swap, vmdk). Each object has at least 3 components (data mirror 1, data mirror 2, witness) to satisfy #FTT=1. Lets just focus on the vmdk object and say that the VM sits on host 1 with mirror components of its vmdk data on host 1 and 2 and the witness component on the virtual Witness VM (host 3).

OK, lets start causing some trouble. With the default # Failures To Tolerate equal 1, VM data on VSAN should be available if a single caching device, a single capacity device, or an entire host fails. If a single capacity device fails, lets say the one on esxi-02, no problem, another copy of the vmdk is available on esxi-01 and the witness is available on the Witness VM so all is good. There is no outage, no downtime, VSAN has tolerated 1 failure causing loss of one mirror, and VSAN is doing its job per the defined policy and providing access to the remaining mirror copy of data. Each object has more that 50% of its components available (one mirror and witness are 2 out of 3 i.e. 66% of the components available) so data will continue to be available unless there is a 2nd failure of either the caching device, capacity device, or esxi-01 host. The situation is the same if the caching device on esxi-02 fails or the whole host esxi-02 fails. VM data on VSAN would still be available and accessible. If the VM happened to be running on esxi-02 then HA would fail it over to esxi-01 and data would be available. In this configuration, there is no automatic self healing because there’s no where to self heal to. Host esxi-02 would need to be repaired or replaced in order for self healing to kick in and get back to compliance with both mirrors and witness components available.

Self healing upon repair

How can we get back to the point where we are able to tolerate another failure? We must repair or replace the failed caching device, capacity device, or failed host. Once repaired or replaced, data will resync, and the VSAN Datastore will be back to compliance where it could then tolerate one failure. With this minimum VSAN configuration, self healing happens only when the failed component is repaired or replaced.

2-Node VSAN Self Healing Within Hosts and Across Cluster

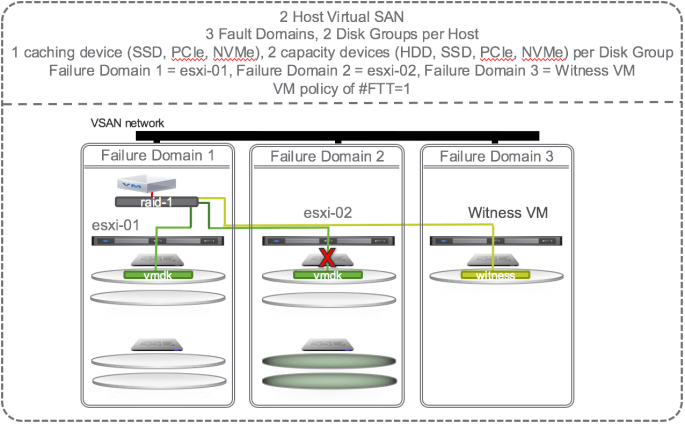

To get self healing within hosts and across the hosts in the cluster you must configure your hosts with more disks. Let’s investigate what happens when there are 2 SSD and 4 HDD per host and 4 hosts in a cluster and the policy is set to # Failures To Tolerate equal 1 using the RAID 1 (mirroring) protection method.

If one of the capacity devices on esxi-02 fails then VSAN could chose to self heal to:

- Other disks in the same disk group

- Other disks on other disk groups on the same host

The green disks in the diagram below are eligible targets for the new instant mirror copy of the vmdk:

This is not an all encompassing and thorough explanation of all the possible scenarios. There are dependencies on how large the vmdk is, how much spare capacity is available on the disks, and other factors. But, this should give you a good idea of how failures are tolerated and how self healing can kick in to get back to policy compliance.

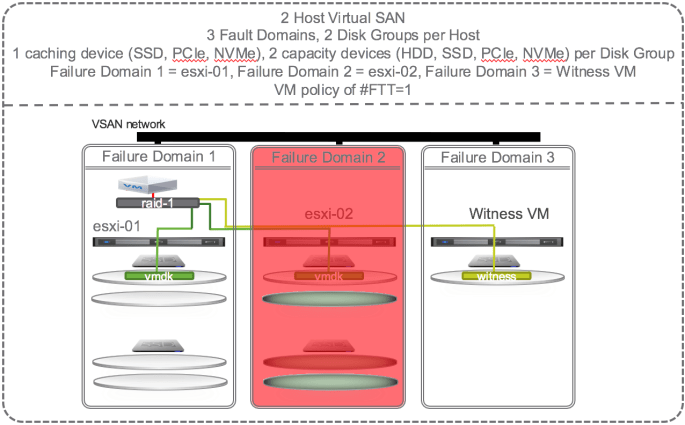

Self Healing When SSD Fails

If there is a failure of the caching device on esxi-02 that supports the capacity devices that contain the mirror copy of the vmdk then VSAN could chose to self heal to:

- Other disks on other disk groups on the same host

- Other disks on other disk groups on other hosts.

The green disks in the diagram below are eligible targets for the new instant mirror of the vmdk:

Self Healing When a Host Fails

If there is a failure of a host (e.g. esxi-02) that supports mirror of the vmdk then VSAN cannot self heal until the host is repaired or replaced.

Summary

VMware Virtual SAN leverages all the disks on all the hosts in the VSAN datastore to self heal. Note that I’ve only discussed above the self healing behavior of one VM but other VM’s on other hosts may have data on the same failed disk(s) but their mirror may be on different disks in the cluster and VSAN might choose to self heal to other different disks in the cluster. Thus the self healing workload is a many-to-many operation and thus spread around all the disks in the VSAN datastore.

Self healing is enabled by default, behavior is dependent on the software defined protection policy (#FTT setting), and can occur to disks in the same disk group, to other disk groups on the same host, or to other disks on other hosts. The availability and self healing properties make VSAN a robust storage solution for all data center applications.