Encryption is here, now shipping with vSphere 6.5.

I first wrote about vSAN and Encryption here:

Virtual SAN and Data-At-Rest Encryption – https://livevirtually.net/2015/10/21/virtual-san-and-data-at-rest-encryption/

At the time, I knew what was coming but couldn’t say. Also, the vSAN team had plans that changed. So, let’s set the record straight.

vSAN

- Does not support Self Encrypting Drives (SEDs) with encryption enabled.

- Does not support controller based encryption.

- Supports 3rd party software based encryption solutions like HyTrust DataControl and Dell EMC Cloud Link.

- Supports the VMware VM Encryption released with vSphere 6.5

- Will support its own VMware vSAN Encryption in a future release.

At VMworld 2016 in Barcelona VMware announced vSphere 6.5 and with it, VM Encryption. In the past, VMware relied on 3rd party encryption solutions, but now, VMware has its own. For more details, check out:

What’s new in vSphere 6.5: Security – https://blogs.vmware.com/vsphere/2016/10/whats-new-in-vsphere-6-5-security.html

In this, Mike Foley briefly highlights a few advantages of VM Encryption. Stay tuned for more from him on this topic.

In addition to what Mike highlighted, VM encryption is implemented using VAIO Filters, can be enabled per VM object (vmdk), will encrypt VM data no matter what storage solution is implemented (e.g. object, file, block using vendors like VMware vSAN, Dell Technologies, NetApp, IBM, HDS, etc.), and satisfies data-in-flight and data-at-rest encryption. The solution does not require SED’s so it works with all the commodity HDD, SSD, PCIe, and NVMe devices and integrates with several third party Key Management solutions. Since VM Encryption is set via policy, that policy could extended across to public clouds like Cloud Foundation on IBM SoftLayer, VMware Cloud on AWS, VMware vCloud Air or to any vCloud Air Network partner. This is great because your VM’s could live in the cloud but you will own and control the encryption keys. And you can use different keys for different VM’s.

At VMworld 2016 in Las Vegas VMware announced the upcoming vSAN Beta. For more details see:

Virtual SAN Beta – Register Today! – https://blogs.vmware.com/virtualblocks/2016/09/07/virtual-san-beta-register-today/

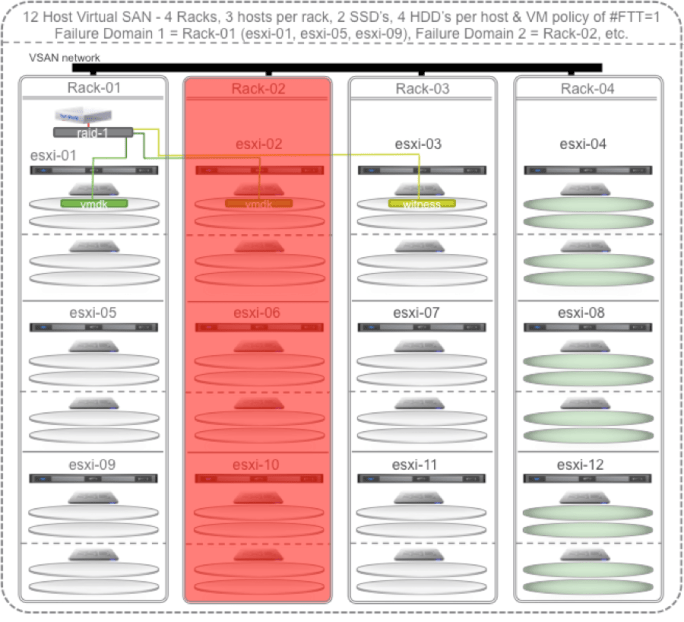

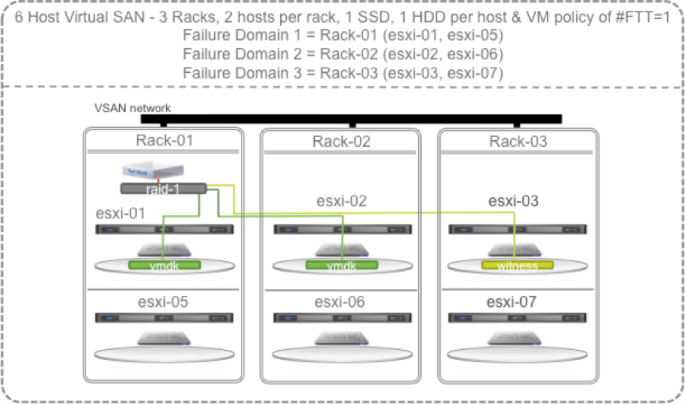

This vSAN Beta includes vSAN encryption targeted for a future release of vSphere. vSAN Encryption will satisfy data-at-rest encryption. You might ask why vSAN Encryption would be necessary if vSphere has VM Encryption? I will say that you should always look to use VM Encryption first. The one downside to VM Encryption is that since the VM’s data is encrypted as soon as it leaves the VM and hits the ESXi kernel, each block is unique, so no matter what storage system that data goes to (e.g. VMware vSAN, Dell Technologies, NetApp, IBM, HDS, etc.) that block can’t be deduped or compressed. The benefit of vSAN encryption will be that the encryption will be done at the vSAN level. Data will be send to the vSAN cache and encrypted at that tier. When it is later destaged, it will be decrypted, deduped, compressed, and encrypted when its written to the capacity tier. This satisfies the data-at-rest encryption requirements but not data-in-flight. It does allow you to take advantage of the vSAN dedupe and compression data services and it’s one key for the entire vSAN datastore.

It should be noted that both solutions will require a 3rd party Key Management Server (KMS) and the same one can be used for both VM Encryption and vSAN Encryption. The KMS must support the Key Management Interoperability Protocol (KMIP) 1.1 standard. There are many that do and VMware has tested a lot of them. We’ll soon be publishing a list, but for now, check with your KMS vendor or your VMware SE for details.

VMware is all about customer choice. So, we offer a number of software based encryption options depending on your requirements.

It’s worth restating that VM Encryption should be the standard for software based encryption for VM’s. After reviewing vSAN Encryption, some may choose it instead to go with vSAN encryption if they want to take advantage of deduplication and compression. Duncan Epping provides a little more detail here:

The difference between VM Encryption in vSphere 6.5 and vSAN encryption – http://www.yellow-bricks.com/2016/11/07/the-difference-between-vm-encryption-in-vsphere-6-5-and-vsan-encryption/

In summary:

- Use VM Encryption for Hybrid vSAN clusters

- Use VM Encryption on All-Flash if storage efficiency (dedupe/compression) is not critical

- Wait for vSAN native software data at rest encryption if you must have dedupe/compression on All-Flash