You’ve built your vSphere cluster with vSAN enabled. Now what? Of course, you can start provisioning VMs in the cluster and placing their VMDKs on the vSAN datastore. But what if you want to move existing VMs onto your new cluster? There are several methods to consider, each with its own benefits and drawbacks. This topic has been explored a few times, and here are some useful links:

Migrating VMs to vSAN

Migrating to vSAN

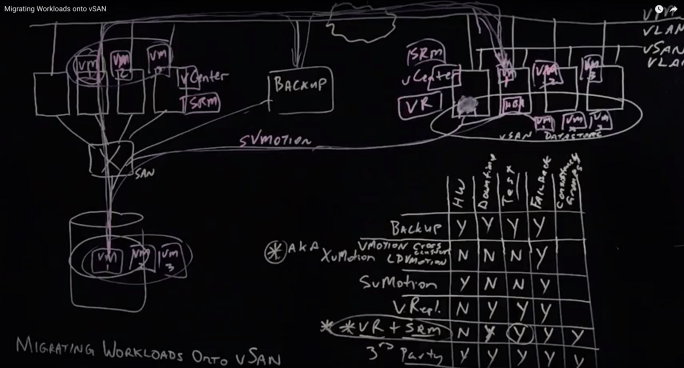

I had the opportunity to record an overview of this topic using our Lightboard technology at VMware headquarters in Palo Alto. You can check it out here:

Migrating Workloads onto vSAN

The lightboard video explores the following methods:

Backup

Simply put, you can back up the VMs sitting in one cluster, shut them down, then restore them onto the new cluster.

Cross Cluster vMotion (AKA XvMotion), Cross vCenter vMotion, Long Distance vMotion (LDM)

You can migrate live VMs from one cluster to another (Cross Cluster vMotion), and those clusters can be managed by different vCenters (Cross vCenter vMotion). This can be great for a few VMs, but with many VMs and a lot of data it can take a while. There’s no downtime for the VMs, but you could be waiting a long time for the migration to complete. For more details, see one of my previous posts:

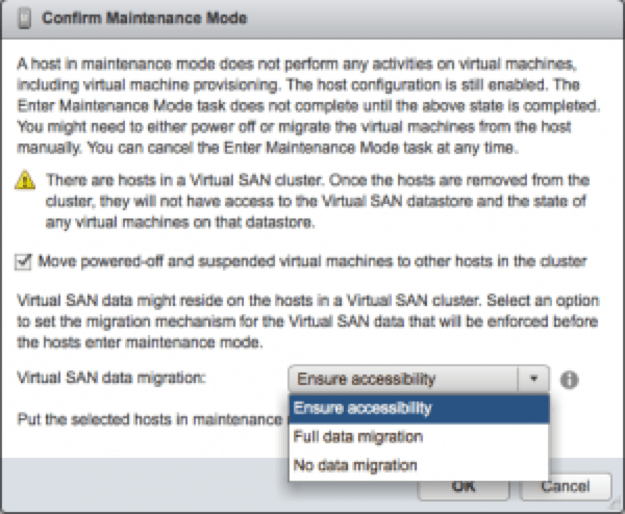
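To get a feel for how long "a while" might be, here is a rough back-of-the-envelope sketch in Python. It is not a VMware tool or API. The function name, the `efficiency` factor, and the example numbers are all assumptions chosen for illustration, and real vMotion throughput depends on many things this ignores (source storage speed, concurrent migrations, VM change rate).

```python
def estimate_migration_hours(data_gb: float, link_gbps: float,
                             efficiency: float = 0.7) -> float:
    """Rough hours needed to copy data_gb gigabytes over a link_gbps link.

    efficiency is an assumed fraction of the nominal link speed that is
    actually usable for migration traffic (hypothetical default of 0.7).
    """
    if data_gb < 0 or link_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("invalid data size, link speed, or efficiency")
    effective_gbps = link_gbps * efficiency       # usable gigabits per second
    seconds = (data_gb * 8) / effective_gbps      # gigabytes -> gigabits
    return seconds / 3600


if __name__ == "__main__":
    # Hypothetical example: 10 TB of VM data over 10 GbE and over 1 GbE.
    for gbps in (10, 1):
        hours = estimate_migration_hours(10_000, gbps)
        print(f"{gbps} Gbps link: about {hours:.1f} hours")
```

Under these assumptions the same 10 TB takes roughly ten times longer on a 1 Gbps link than on a 10 Gbps link, which is why this method suits a handful of VMs better than a whole data center.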

Storage vMotion

This is only possible if your source and destination hosts are connected to the same LUN/Volume on the source storage system. If so, you can have both clusters mount the same LUN/Volume, move the VM from the source cluster to the destination cluster, and also move the data from the source datastore (LUN/Volume on SAN/NAS) to the destination datastore (vSAN). If you are moving off a traditional Fibre Channel SAN, you’ll need to put Fibre Channel HBAs in the hosts supporting the new vSAN datastore.

VMware vSphere Replication

VMware’s vSphere Replication replicates any VM on one cluster to any other cluster. This host-based replication feature is storage agnostic, so it doesn’t matter what the underlying storage is on either cluster. A vSphere snapshot of the VM is taken and that snapshot is used as the source of the replication. Once you know the data is in sync between the source cluster and destination cluster, you can shut down the VMs in the source cluster and power them up in the destination cluster. So, there is downtime. If something doesn’t go right, you can revert to the source cluster. Here’s a good whitepaper on vSphere Replication.

VMware vSphere Replication + Site Recovery Manager

VMware’s vSphere Replication replicates any VM on one cluster to any other cluster. VMware Site Recovery Manager allows you to test and validate the failover from the source to the destination. It lets you script the order in which VMs are powered on, re-IP them if necessary, and automate running pre- and post-scripts. Once you have validated that the failover will happen as you want it to, you can do it for real knowing it’s been pretested. If something goes wrong, it has a “revert” feature to reverse the cut-over and go back to the source cluster until you can fix the problem. Here are a few good whitepapers on Site Recovery Manager.
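To illustrate the idea of an ordered power-on, here is a minimal Python sketch that groups VMs into "waves" by a priority number, lowest first. This is not Site Recovery Manager’s API or data model. The function name, the VM names, and the priority values are hypothetical and only show the kind of ordering you would configure in a recovery plan.

```python
from collections import defaultdict


def power_on_waves(vm_priorities: dict[str, int]) -> list[list[str]]:
    """Group VM names into power-on waves, lowest priority number first.

    vm_priorities maps a (hypothetical) VM name to a priority number.
    VMs that share a priority end up in the same wave, sorted by name.
    """
    waves: dict[int, list[str]] = defaultdict(list)
    for vm, priority in vm_priorities.items():
        waves[priority].append(vm)
    return [sorted(waves[p]) for p in sorted(waves)]


if __name__ == "__main__":
    # Hypothetical three-tier application: database, then app, then web.
    plan = {"db01": 1, "app01": 2, "web01": 3, "web02": 3}
    for number, wave in enumerate(power_on_waves(plan), start=1):
        print(f"Wave {number}: {', '.join(wave)}")
```

In this example the database comes up first, the application server second, and both web servers together last, which is the sort of dependency order you would want to validate in a test failover before the real cut-over.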

3rd Party Replication

DellEMC RP4VMs replicates data prior to cut-over. Once you know the data is in sync between the source cluster and destination cluster, you can shut down the VMs in the source cluster and power them up in the destination cluster. So, there is downtime. If something doesn’t go right, you can revert to the source cluster. There are other 3rd party options on the market, including solutions from Zerto and Veeam.

What About VMware Cloud on AWS?

Since vSAN is the underlying storage on VMware Cloud on AWS, all the options above will work for migrating workloads from on-premises to VMware Cloud on AWS.

Summary

Personally, I like the ability to test the migration “cut-over” using Site Recovery Manager, so I’d opt for the vSphere Replication + Site Recovery Manager option if possible. If it’s only a few VMs and a small amount of data, then XvMotion would be the way to go.