One of VMware’s guiding principles is to be a force for good (VMware 2030 Agenda). VMware’s impact on reducing CO2 emissions for customers worldwide has been well documented (VMware Global Impact Report 2020). Beyond that, VMware’s force for good has enabled customer choice by liberating organizations from physical constraints. For many years, that meant a choice of hardware on which to run or access applications. Now, VMware Cloud gives customers the choice to run any application in any cloud with a consistent experience.

From an IT perspective, applications are the center of the universe. For years, IT operations staff have worked to perfect building, running, and managing compute, network, and storage. But none of that would matter if there were no applications to run the business.

If a SaaS offering such as Salesforce.com, Workday, or Coupa meets your business needs, then go for it!

For all other applications, the choice is between a commercial off-the-shelf software package and building your own. Either way, that application has to run somewhere: in the public cloud or in your private data center cloud. Many factors influence that choice.

I’ve worked with a large global bank that has proven that, with VMware, it can build, run, and manage its own data centers more cost-effectively than today’s big-name public cloud providers.

I’ve worked with another financial services company that needs the AWS and Azure public clouds so it can burst capacity on demand, because keeping infrastructure on standby in its own private cloud is not economically feasible. However, it must also maintain private clouds to meet the security and performance requirements of some applications. As a result, it requires a hybrid cloud and multi-cloud strategy.

As you can see, there’s no single clear answer to where applications should reside. That’s why VMware offers choice. One thing is clear: of the organizations VMware studied this year, 90% of executives are prioritizing the migration and modernization of their legacy apps. VMware understands that businesses need a range of modernization strategies, and the 5 R’s of app modernization (Retain, Rehost, Replatform, Refactor, and Retire) are designed to provide just that.

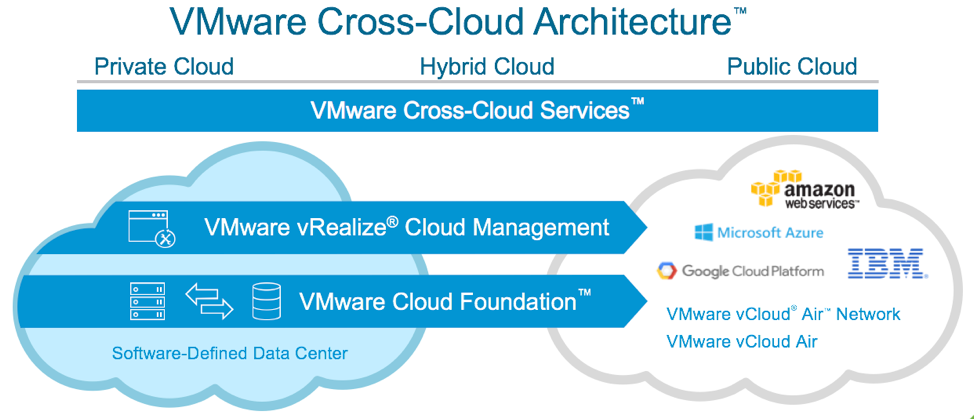

Retain – If applications must be Retained in a private cloud, many companies have proven that with VMware Cloud Foundation and the vRealize Suite they can operate their own cloud at the highest levels of performance, availability, and efficiency, and do so cost-effectively, securely, and with operational simplicity.

Rehost/Migrate – Some customers are choosing to Rehost or Migrate their applications to a public cloud. The good news is that the same private cloud solution that has powered 85 million workloads for the most demanding businesses is available in over 4,000 public clouds, including VMware Cloud on AWS, Azure VMware Solution, and thousands of our other cloud partners. Applications can be migrated instantly, without disruption or recoding, and they can be secured and managed the same way as in the customer’s own private cloud. Once there, native cloud services can be leveraged to add new functionality to existing apps.

Replatform – With vSphere 7, VMware brings native Kubernetes support to vSphere. This allows you to Replatform, or repackage, existing applications into containers and orchestrate them with Kubernetes. In other words, you can run, observe, and manage containers the same way you manage VMs.

Refactor/Build – VMware has a long history of supporting open-source applications for millions of developers. With VMware Tanzu, developers can build new digital services for the future by rewriting and Refactoring existing apps to a cloud native architecture, Building new ones, deploying them quickly, and operating them seamlessly.

Retire – If you execute your application modernization strategy well, you’ll be able to Retire legacy applications that have been costly to maintain.

VMware believes the needs of your business and applications should drive your cloud strategy. VMware Cloud supports applications deployed across a range of private and public clouds, unified by centralized management, operations, governance, and security.

VMware’s force for good preserves your choice of where to run your applications.

For more information on today’s VMware Cloud announcements, check out The Distributed, Multi-Cloud Era Has Arrived.