I watched the streaming video of the VMworld 2015 Keynote this morning and there were plenty of highlights. In my mind, and in the minds of many others, Yanbing Li stole the show. Not only did she deliver new and impressive announcements, she was funny too. And she comes from the Storage and Availability Business Unit.

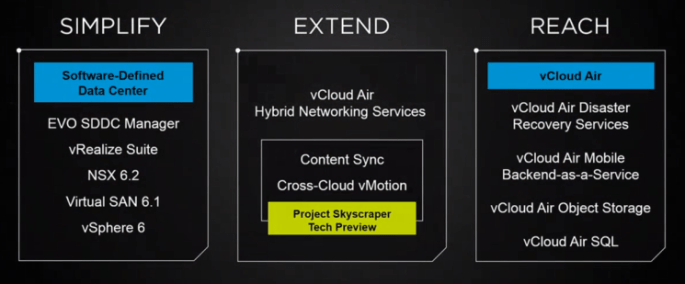

The Keynote began with somewhat cute application mascots escorting Carl Eschenbach on stage. Apps are the important thing, but they have to run somewhere… on infrastructure. Yanbing’s first message was that VMware is constantly working to Simplify infrastructure, which makes sense. Let’s make infrastructure as simple as possible so that the focus can be put on the important things, the applications.

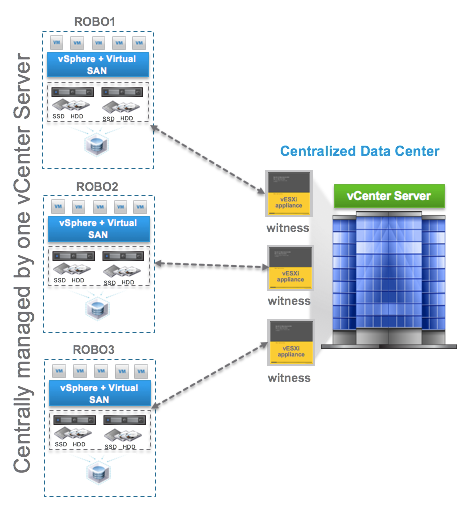

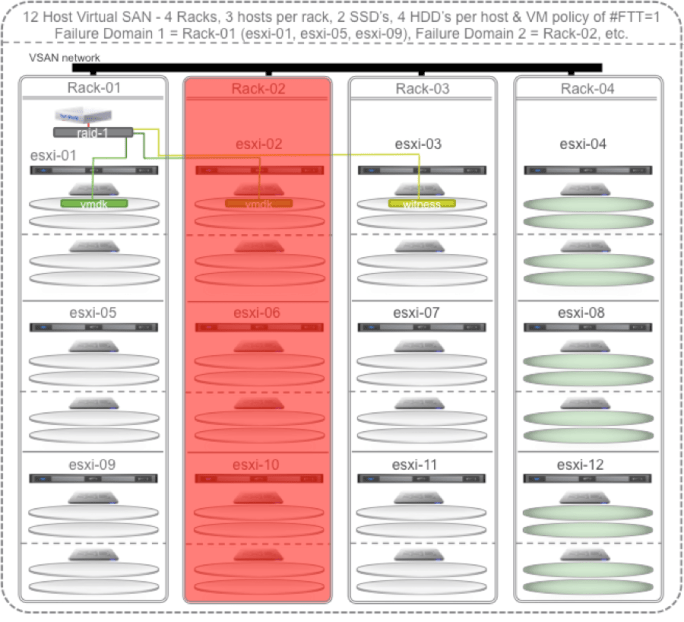

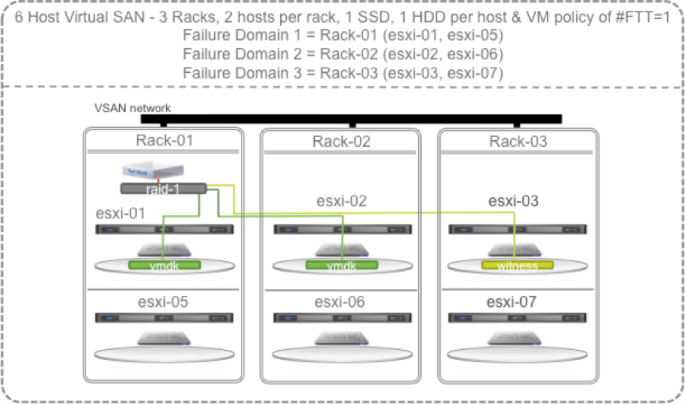

Yanbing described VMware’s infrastructure foundation as vSphere 6 for compute, NSX 6.2 for network, and, for storage, “my personal favorite storage platform, Virtual SAN” 6.1. Of course there are lots of other storage choices out there, and VASA, VVols, and SPBM aim to simplify the management of those. But if you are looking to build the simplest possible physical infrastructure, Virtual SAN makes storage a simple extension of the capabilities of the vSphere cluster. Here’s more on “What is new for Virtual SAN 6.1?”.

Once the infrastructure is up and running, vRealize Suite simplifies its management and the deployment of VMs. All this infrastructure software has to run on hardware, and that hardware can be a challenge to set up. So, the big announcement is how EVO SDDC Manager will simplify the deployment of physical infrastructure. The same simplification benefits that EVO:RAIL brought will be extended to whole racks of hardware. Each rack will support 1000 VMs or 2000 VDI desktops and provide up to 2M IOPS of storage performance. Using the EVO SDDC Manager, multiple racks can be combined to create a virtual rack that can be divided up into workload domains. These workload domains can be secured with NSX for multi-tenancy protection. In addition, EVO SDDC Manager will provide Non-disruptive LifeCycle Automation to take care of infrastructure and software updates, just like EVO:RAIL does today. Pretty cool. I’m looking forward to seeing this in action.
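To get a feel for what those per-rack numbers mean, here is a rough back-of-the-envelope sketch. It is not a VMware sizing tool: the function name `racks_needed` is made up for illustration, the per-rack figures are simply the ones quoted in the keynote, and it assumes VM and VDI capacity trade off linearly within a rack, which the keynote did not state.

```python
import math

# Per-rack figures as quoted in the keynote (upper bounds, not guarantees).
VMS_PER_RACK = 1000
VDI_PER_RACK = 2000
IOPS_PER_RACK = 2_000_000


def racks_needed(vms=0, vdi_desktops=0, iops=0):
    """Rough rack estimate (hypothetical helper, not a VMware API).

    Assumption: a rack can host a linear mix of VMs and VDI desktops,
    e.g. 500 VMs plus 1000 desktops fills exactly one rack.
    """
    compute_racks = vms / VMS_PER_RACK + vdi_desktops / VDI_PER_RACK
    storage_racks = iops / IOPS_PER_RACK
    # Size for whichever resource runs out first; never report fewer than one rack.
    return max(1, math.ceil(max(compute_racks, storage_racks)))


print(racks_needed(vms=2500))
print(racks_needed(vms=500, vdi_desktops=1000))
print(racks_needed(vms=800, iops=5_000_000))
```

In the last call the storage requirement, not the VM count, drives the estimate, which is the kind of trade-off a real sizing exercise would have to look at in much more detail.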

Next, Yanbing discussed how to Extend your datacenter. Basically, what new things can be done to federate hybrid clouds (between your own datacenters, or between your datacenter and a public cloud)? The first thing discussed was something called Content Library Automatic Synchronization, and Yanbing demonstrated syncing VM templates between datacenters or with vCloud Air. This is great, but to move live workloads, VMware introduced vCloud Air Hybrid Network Services to enable vMotion between hybrid clouds. Side note: I was part of a project that demonstrated an alpha version of something like this at EMC World a few years back, but back then we didn’t have NSX. Last year, VMware announced long distance vMotion between customer datacenters. But this year, in Yanbing’s words, “we have just witnessed history, cross-cloud vMotion”. Makes you think back to the first time you witnessed the original vMotion.

Finally, Yanbing discussed the benefits of Reach and vCloud Air’s capabilities. Disaster Recovery and Backup Services have been available for a while to protect critical workloads. Now VMware has announced the availability of vCloud Air Object Storage as a service, which is powered by EMC’s Elastic Cloud Storage. This is great news for customers: object storage is becoming more and more of a requirement, and it is one that can now be satisfied by the expanding capabilities of VMware’s Storage and Availability Business Unit and vCloud Air.

There was more to the Keynote presentation, but I chose to focus this post on the Storage and Availability announcements and Yanbing’s presentation. As she walked off stage she yelled “Go VSAN!”… Awesome!